AXIOM Project Background

"The most interesting aspect of the AXIOM cameras is that they have the potential to be the last camera you will ever need due to the fact that they're infinitely upgradeable… they won't eventually become technologically obsolete like most cameras." - Robert Hardy, No Film School.

An Introduction to The AXIOM Project's Beginnings

To trace apertus° back to its inception, before the individuals who now make it happen got involved and before the cameras as they stand today were put together, we need to acknowledge Oscar Spierenburg’s vision of bringing a camera with no artificial restrictions to market.

One of the biggest problems in modern cinematography and filmmaking is that the camera is essentially the equivalent of an aeroplane’s black box. The path the data takes from the sensor to the camera's storage medium is typically shrouded in encryption and, as a result, the filmmaker isn’t able to control its flow at all. He or she has effectively been blinded by closed proprietary technology embedded in the camera by manufacturers bent on achieving two things: ensuring that competing manufacturers aren’t able to exploit their designs, and locking consumers into further purchases when they want to upgrade their systems in the future. The apertus° AXIOM Beta will break these kinds of barriers.

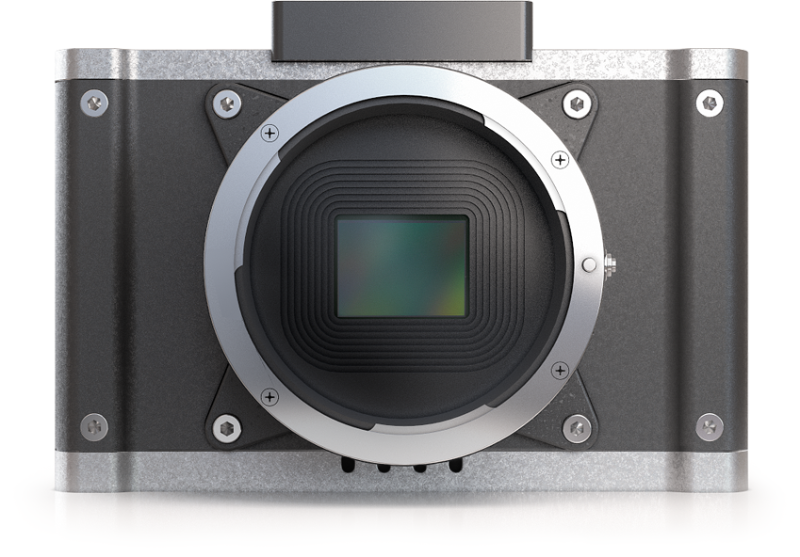

Above: AXIOM Beta early enclosure concept circa 2014.

The process began in a community forum where people who shared Oscar Spierenburg's vision could contribute ideas. Once development had gathered momentum, the company was registered in 2012 and, after an extremely successful crowdfunding campaign, the team were further humbled when the AXIOM project was awarded an EU Horizon 2020 grant to assist with development. Now, almost ten years of development have culminated in the approaching launch of the AXIOM Beta, a compact Super35 4K cinema camera.

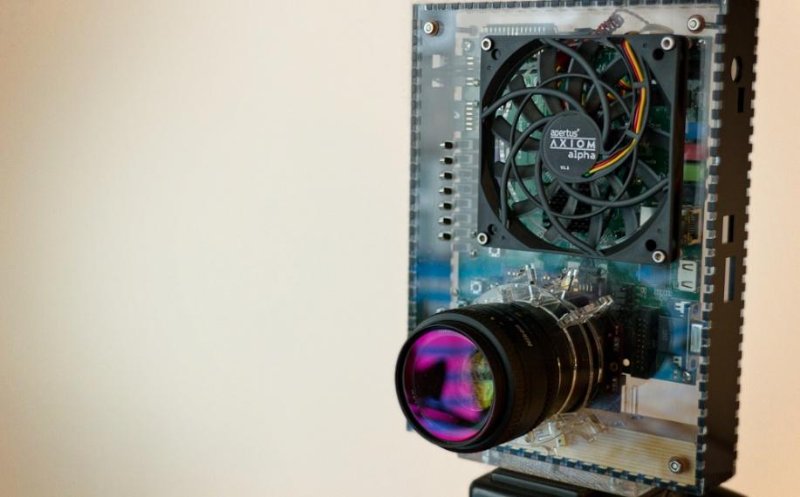

Above: AXIOM Alpha proof of concept prototype.

As the predecessor to the AXIOM Beta, the AXIOM Alpha prototype (an FPGA and CPU combination, pictured above) was used to gather feedback in typical shooting scenarios so that any resulting ideas could be incorporated into a future, more modular, kit version aimed at developers and early adopters. The intention was to reduce the components to the bare essentials required to shoot high-quality, film-like moving images.

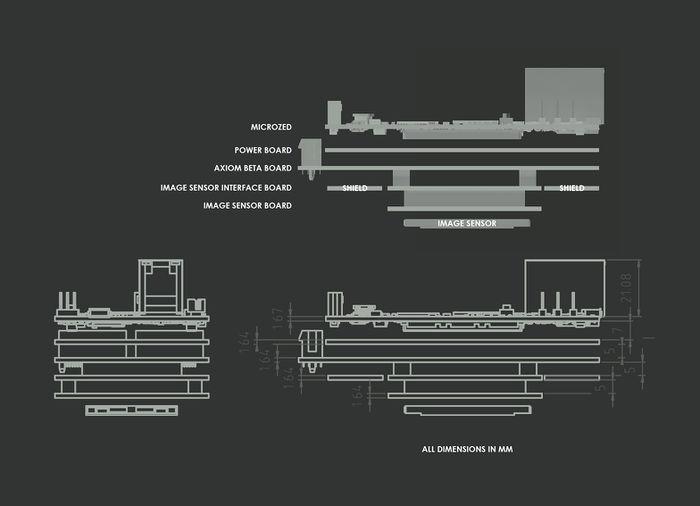

“The hardware design of the AXIOM Beta was kept simple at first, purely addressing problems discovered with the AXIOM Alpha. In the beginning we had intended to design the camera around a single board on top of an off-the-shelf FPGA/system-on-chip development board: the MicroZed™, but as a result of field testing and together with feedback gathered from the community we agreed to make it far more powerful by devising a more complex stack of boards where each layer is dedicated to specific functions.” - Sebastian Pichelhofer.

Above: AXIOM Beta electronic board stack.

Hardware

The AXIOM Beta comprises five printed circuit boards (PCBs):

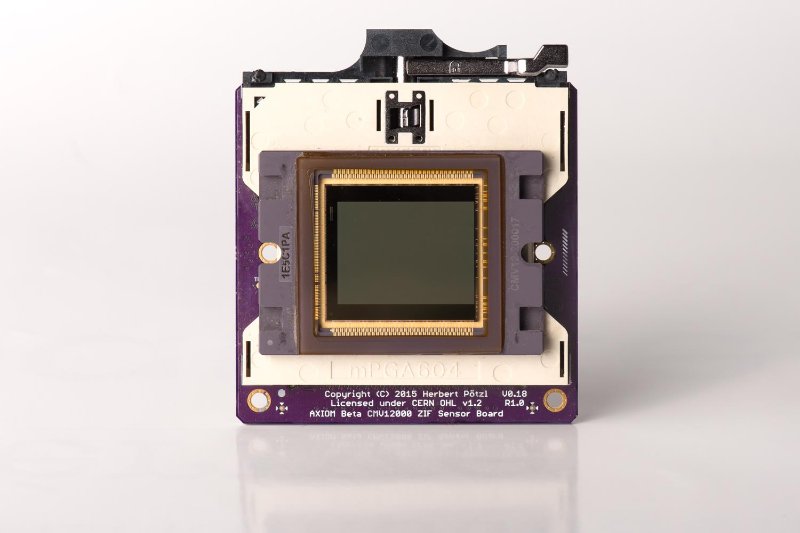

The image sensor board hosts the heart of every cinema camera - the (CMOS) image sensor. apertus° offered three different sensor options during the crowdfunding campaign: Super35, Super16 and 4/3rds. As almost 90% of the backers opted for the Super35 sensor, its module was developed first.

Above: Temporary image sensor interface board circa 2016 (a dedicated image sensor module was designed in early 2017).

The Interface Board acts as a bridge between the image sensor board and the rest of the camera. It converts communication between the two to a standard protocol, so that almost any image sensor that becomes available in the future can be used with the AXIOM Beta without changing the rest of the hardware. If AXIOM users felt that 8K was in demand, for example, they could simply swap the sensor board for one capable of capturing images at that resolution.
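The bridging idea can be pictured in software as an adapter. The following is only a loose analogy in Python, not the AXIOM Beta's actual protocol or firmware: every class name, field and resolution value is a hypothetical placeholder chosen for illustration. It shows how code standing in for "the rest of the camera" can stay unchanged when the sensor board behind the interface is swapped.

```python
from dataclasses import dataclass
from typing import Protocol


@dataclass(frozen=True)
class Frame:
    """Hypothetical common format that the rest of the camera understands."""
    width: int
    height: int
    bit_depth: int


class SensorBoard(Protocol):
    """Any sensor board the (hypothetical) interface layer can talk to."""
    def read_raw(self) -> dict: ...


class FourKSensorBoard:
    # Placeholder values, not a real sensor's specification.
    def read_raw(self) -> dict:
        return {"cols": 4096, "rows": 2160, "bits": 12}


class EightKSensorBoard:
    # Placeholder values for an imagined future sensor board.
    def read_raw(self) -> dict:
        return {"cols": 7680, "rows": 4320, "bits": 12}


class InterfaceBoard:
    """Converts sensor-specific output into the common Frame format."""

    def __init__(self, sensor: SensorBoard) -> None:
        self.sensor = sensor

    def next_frame(self) -> Frame:
        raw = self.sensor.read_raw()
        return Frame(raw["cols"], raw["rows"], raw["bits"])


def describe(camera_input: InterfaceBoard) -> str:
    """Stands in for the rest of the camera: it only ever sees Frame."""
    frame = camera_input.next_frame()
    return f"{frame.width}x{frame.height} @ {frame.bit_depth}-bit"


if __name__ == "__main__":
    # Swapping the sensor board requires no change to describe().
    print(describe(InterfaceBoard(FourKSensorBoard())))
    print(describe(InterfaceBoard(EightKSensorBoard())))
```

The point of the sketch is that `describe` depends only on `Frame`, so a new sensor board needs only an object that the interface layer knows how to read.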

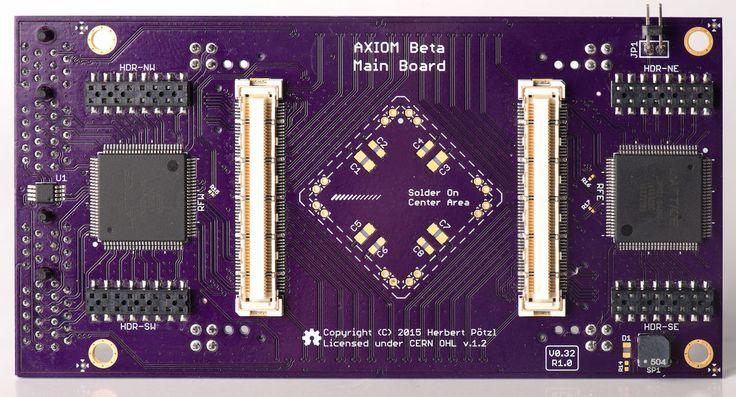

Above: AXIOM Beta Main Board circa 2016

The Main Board is the equivalent of a PC's motherboard. It hosts two external medium-speed shield connectors and two high-speed plugin module slot connectors. Together these act as a central switch that defines where data captured by the sensor and other interfaces is routed inside the hardware. This routing can be dynamically reconfigured in software, which opens up new possibilities such as adding shields for audio recording, genlock, timecode or remote control protocols, or integrating new codecs and image processing inside the FPGA. A 'solder-on' area has been incorporated in the centre of the main board. This will, for example, host chips capable of sensing the camera's orientation and acceleration (the same kind of chips used to stabilise quadcopters and track head movements in VR headsets). Being situated directly behind the centre of the image sensor, these sensors are ideally positioned to supply data for image stabilisation or metadata about the camera’s orientation and movement during a shot.
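As a rough illustration of what a software-reconfigurable "central switch" means, here is a toy Python model of a routing table. It is a sketch under stated assumptions, not the AXIOM Beta's real control software: the class, its methods and the port names (`sensor`, `shield_1`, `plugin_1` and so on) are all hypothetical, loosely mirroring the two shield connectors and two plugin slots described above.

```python
class MainBoardRouter:
    """Toy model of a switch whose routes can be changed at runtime."""

    def __init__(self, ports):
        self.ports = set(ports)
        self.routes = {}  # source port -> set of destination ports

    def connect(self, source, destination):
        """Add a route; both ends must be known ports."""
        for port in (source, destination):
            if port not in self.ports:
                raise ValueError(f"unknown port: {port}")
        self.routes.setdefault(source, set()).add(destination)

    def disconnect(self, source, destination):
        """Remove a route if it exists; otherwise do nothing."""
        self.routes.get(source, set()).discard(destination)

    def route(self, source, payload):
        """Return {destination: payload} for each configured destination."""
        return {dest: payload for dest in sorted(self.routes.get(source, ()))}


if __name__ == "__main__":
    # Hypothetical port names for illustration only.
    router = MainBoardRouter(
        ["sensor", "shield_1", "shield_2", "plugin_1", "plugin_2"]
    )
    router.connect("sensor", "plugin_1")   # e.g. a video output module
    router.connect("sensor", "shield_1")   # e.g. a metadata consumer
    print(router.route("sensor", b"frame-data"))

    # Reconfigure without touching any "hardware": drop one destination.
    router.disconnect("sensor", "plugin_1")
    print(router.route("sensor", b"frame-data"))
```

In the real camera this kind of reconfiguration would happen in the FPGA fabric rather than in a Python dictionary; the model only conveys that destinations are a matter of configuration rather than fixed wiring.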
